I was ten minutes into OpenAI’s livestream when the screen cut to an absurdly detailed bowl of rice and one grain had the model’s name on it. You felt the room hold its breath. That tiny, deliberate joke said more about ambition than a slide deck ever could.

I’ve watched these product moments before, and you should treat this one like a test you’re grading—strictly. OpenAI is asking you to forget Nano Banana Pro and come back to ChatGPT, and the company has stacked the demo to make that ask hard to ignore.

The demo served a bowl of rice and a promise

The livestream used an old advertising trick: a small, memorable detail to sell a big claim. OpenAI’s promo called Images 2.0 a Renaissance, and Sam Altman compared the leap to moving from GPT-3 to GPT-5 in one go.

There are two operating modes. Instant is the sped-up image generator now rolling out to ChatGPT and API users. Thinking is gated to paid tiers—Plus, Pro, Business—and it behaves differently: it can fetch live web context, generate multiple distinct outputs from a single prompt, and run internal checks on its own results.

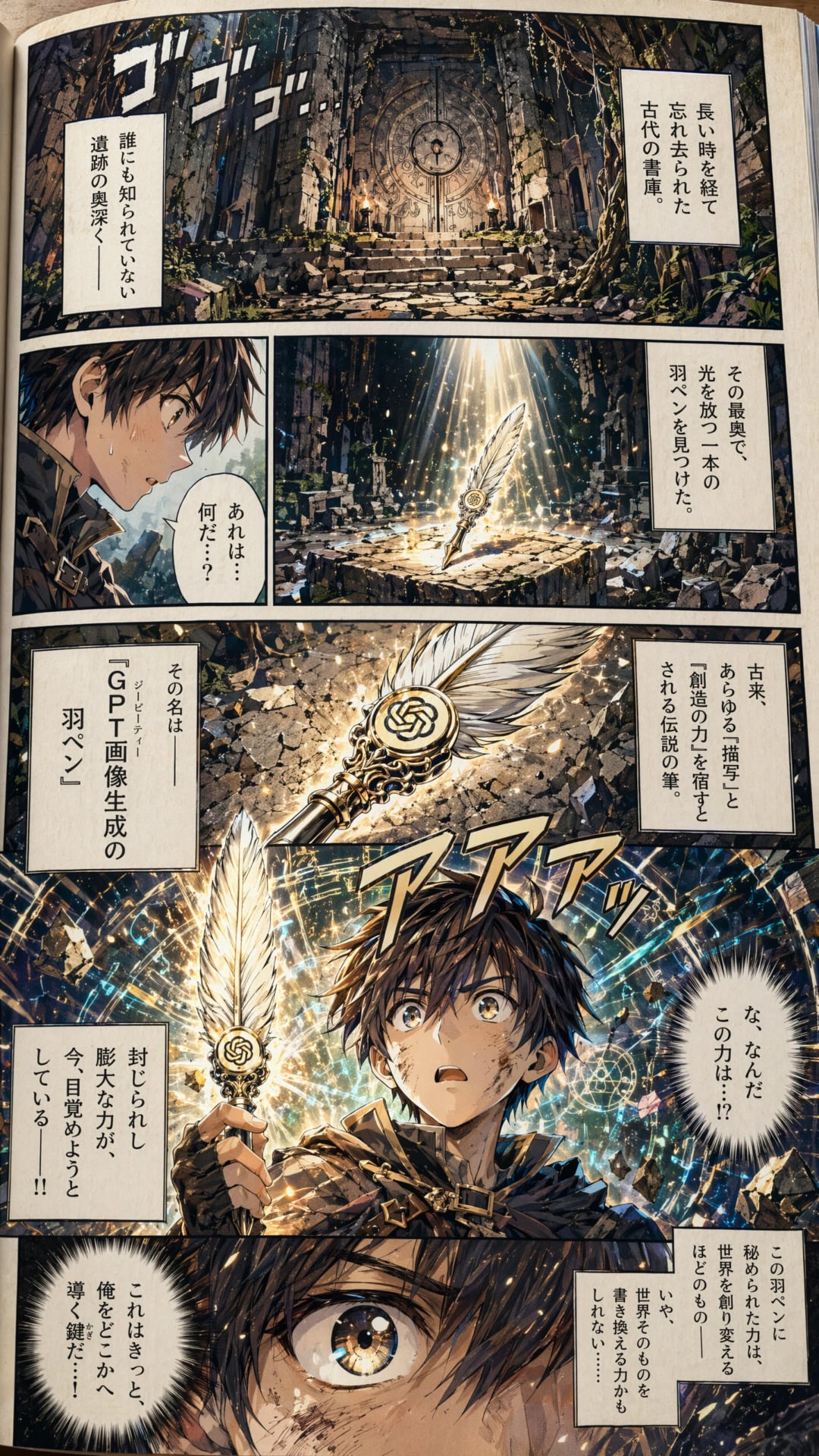

The company also highlighted multilingual prompts, finer visual intelligence, and fewer typos in rendered text. In the demo, Thinking mode produced the bowl of rice with a single grain labeled with the model name, and a manga sequence that kept characters consistent across pages. I’ll be honest: at times the demo felt like a magician shuffling the deck. It was impressive, but you want to know how the trick works.

What is ChatGPT Images 2.0?

Images 2.0 is OpenAI’s next-gen image system inside ChatGPT and the API. You can use a quick Instant mode for basic image generation, or pick Thinking to get sequencing, cross-frame coherence, and web-aware verification. Think of Instant as fast drafts and Thinking as a collaborative artist that checks facts and keeps storylines coherent.

Early testers left breadcrumbs across forums

Random Reddit and X posts had already given away pieces of the machine: code names, Arena AI tests, and demo images. Enthusiasts labeled it GPT-image-2 and flagged tests under handles like maskingtape-alpha.

Those leaks were mostly flattering, but reporters and hobbyists found obvious slip-ups too, such as a generated world map with fictional countries and capitals shoved into the wrong continents. OpenAI claims Thinking mode reduces that sort of fabrication by checking web facts before finalizing outputs, which is a practical answer to a recurring problem in image models.

That impulse to verify matters because photorealism is the obvious viral target: Gabriel Goh told the livestream he’s most excited about photoreal work because it “triggers something very interesting.” If the model can make believable candid photos, it will create moments that spread fast across social feeds. At its best, that kind of spread can act as a lighthouse in a fog—guiding users back to a single product name.

How does Images 2.0 compare to Nano Banana Pro?

Google’s Nano Banana Pro and Gemini 3 set a high bar late last year; OpenAI’s response has been urgent. The company declared “code red” internally, updated Codex, and trimmed projects like Sora to tighten the story for investors.

Anthropic’s agentic tools, Claude Cowork and Claude Code, are pushing OpenAI from the side, and partners like Nvidia, via CEO Jensen Huang, have voiced public concern about market dominance. Images 2.0 is a response on multiple fronts: product competitiveness, user retention, and PR momentum. If it sparks a photoreal viral moment akin to last year’s Studio Ghibli craze, it could nudge weekly active users from 900 million toward the billion mark everyone loves to quote.

Financial theater and a reputation to manage

OpenAI is reportedly prepping for an IPO as soon as this year, and that changes incentives. The firm has restructured as a for-profit public benefit corporation and is trimming products to tidy up its finances.

Images 2.0 is both product and signal: a way to grow engagement, generate headline-grabbing viral moments, and give investors a narrative about growth and differentiation from Google and Anthropic. You should read the release with one eyebrow raised: history shows flashy demos can move numbers, but investors will want sustained metrics, not just memeable images.

Will Images 2.0 help OpenAI’s IPO?

Maybe. A viral photoreal hit could be the kind of momentum investors like, especially if weekly active users climb past public milestones. But the real test is whether the Thinking mode’s verifications reduce hallucinations at scale and whether OpenAI can monetize these features in a way that satisfies profit-minded shareholders.

I think you should watch what the model does in the wild, not only what it does on stage. The rice trick was smart; the question now is whether Images 2.0 will keep delivering smart moves or just rehearsed theatrics for a headline—what do you think?