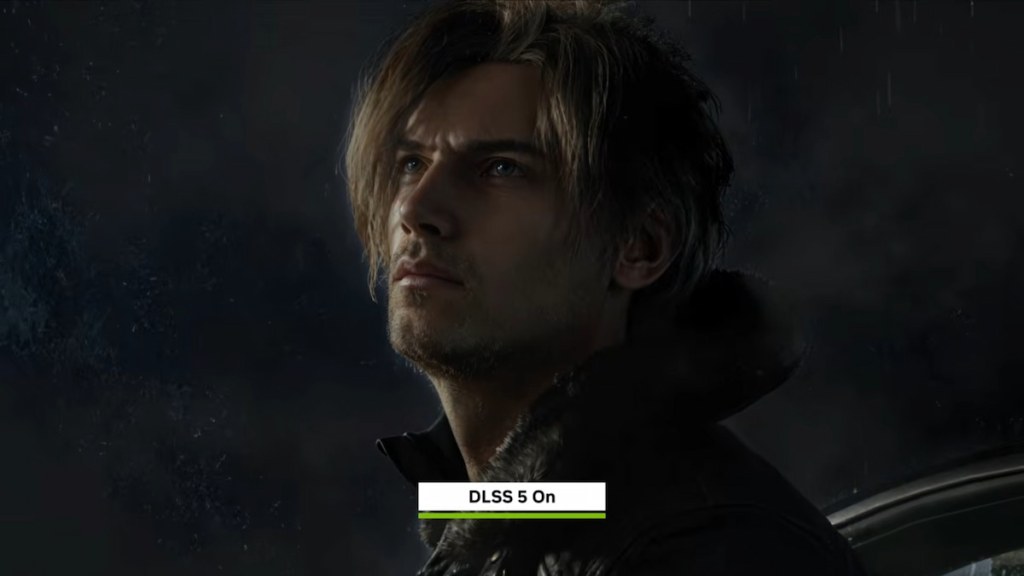

I was at GDC, watching a demo, when the face on-screen stopped being a character and became a curiosity. I blinked, then felt the small, sour disappointment that comes when a trick is passed off as craft. You probably felt it too when you saw Grace and Leon turned into that same flat, filtered version of humanity.

I’ve followed GPU tech for years, and you learn to read hype without swallowing it. So when Nvidia rolled out DLSS 5 and Digital Foundry described it as “using machine learning to leapfrog generations of GPU hardware,” my instinct was to test the sales pitch against the pixels. You should know what this technology does to faces — because it matters more than marketing wants to admit.

At a trade show demo: a famous face becomes a filter

That image of Resident Evil Requiem’s Grace Ashcroft didn’t look like the character, and it didn’t look like the model Julia Pratt either. It looked like a mass-produced social ad you swipe past. I sat there, and the more I looked, the more the faces emptied out: expression traded for smoothness, personality flattened into uniform lighting.

DLSS 5, as Nvidia presented it, replaces traditional lighting with a model driven by color data and motion vectors. The pitch: smarter rendering using machine learning on current hardware. The result shown in the demo was something else: lifeless faces that read as filtered and generic. It’s a reminder that algorithmic shortcuts can erase the subtleties artists spend years learning.

What is DLSS 5 and how does it work?

DLSS 5 is Nvidia’s latest attempt to use neural networks to reconstruct frames, leaning on motion vectors and color buffers rather than full ray-traced light bounces. Nvidia says this lets older hardware mimic newer lighting budgets. Digital Foundry called it ambitious; I call it experimental and uneven when it comes to human faces.
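To make "leaning on motion vectors and color buffers" less abstract, here is a minimal sketch of the generic idea behind temporal reconstruction: reuse last frame's color by following each pixel's motion vector, then blend in the new frame. This is not Nvidia's algorithm, which is a proprietary neural network. The function names `reproject` and `accumulate`, the integer motion vectors, and the grayscale frames are all assumptions made for illustration.

```python
def reproject(prev_frame, motion, fallback):
    """Fetch each pixel's color from where it was in the previous frame.

    prev_frame: 2D list of grayscale values from the previous frame.
    motion:     2D list of (dx, dy) integer offsets; the pixel now at (x, y)
                was at (x - dx, y - dy) in the previous frame.
    fallback:   2D list used when the source position is off-screen.
    (All three are hypothetical inputs, not a real DLSS interface.)
    """
    height, width = len(prev_frame), len(prev_frame[0])
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            dx, dy = motion[y][x]
            sx, sy = x - dx, y - dy
            if 0 <= sx < width and 0 <= sy < height:
                row.append(prev_frame[sy][sx])
            else:
                # No history for this pixel, so use the fallback color.
                row.append(fallback[y][x])
        out.append(row)
    return out


def accumulate(current, history, alpha=0.1):
    """Blend the new frame into reprojected history (exponential average)."""
    return [
        [alpha * c + (1.0 - alpha) * h for c, h in zip(cur_row, hist_row)]
        for cur_row, hist_row in zip(current, history)
    ]


# A one-row frame in which everything moved one pixel to the right.
prev = [[0, 10, 20, 30]]
motion = [[(1, 0), (1, 0), (1, 0), (1, 0)]]
current = [[5, 0, 10, 20]]
history = reproject(prev, motion, fallback=current)
blended = accumulate(current, history, alpha=0.5)
```

A low `alpha` leans heavily on history, which steadies the image but can also smear fine detail. That tradeoff exists in any temporal technique, and it is one plausible place where the nuance in a face could get averaged away.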

On camera: the uncanny valley keeps showing up

When you compare the original character models with Nvidia’s demo frames, something familiar appears: that same uncanny, AI-generated face we’ve seen on social feeds. The eyes are too glossy and the skin too uniform, like a Snapchat filter laid over an oil painting.

It’s not just cosmetic. Faces communicate intent, history, pain, humor. When rendering strips micro-details and replaces them with learned approximations, you lose those cues. For games that rely on performance capture and emotional beats, that loss is not merely aesthetic; it changes storytelling.

Will DLSS 5 come to my GPU?

Nvidia plans DLSS 5 for the RTX 50-series later this year and demonstrated it with titles like Requiem and Starfield. For most players, adoption will depend on hardware cycles and developer support — so your experience may not match the glossy demos until patches and driver updates arrive.

In the studio: artists are quietly worried

I spoke with a friend who’s worked on character lighting. His reaction to the demo matched mine: “It’s efficient, but it eats nuance.” You can hear that worry in forums and private chats: will machine learning replace craft, or just mimic it poorly?

There’s an economic angle too. Nvidia and other companies are pouring resources into cloud-based training and inference. Those data centers don’t run cheap: specialized GPUs and racks can push costs into the thousands. Think of $3,000 (€2,760) or more per accelerator once you factor in hardware and overhead. That pricing funnels power to large studios and platform holders, not indie teams.

At the keyboard: what you should watch for

When a new rendering tech is hyped, watch these things: comparisons using side-by-side footage, dev tools showing when ML replaces artist-driven passes, and post-launch patches that refine character work. I follow Digital Foundry for technical breakdowns and dev blogs for human context — those are the places that separate marketing from reality.

If you care about performance and fidelity, demand test footage that isolates lighting, skin shaders, and motion capture. Ask developers how DLSS 5 interacts with performance capture pipelines and whether it preserves artist intent. If a demo flattens nuance, call it out; criticism drives better implementations.
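If you want to put a rough number on "flattened nuance" when comparing two captures of the same frame, a crude sketch like the one below can help. It assumes you have already extracted matching grayscale crops of a face as 2D lists. The names `mean_abs_diff` and `detail_energy` are my own, and neighbor-to-neighbor contrast is only a loose stand-in for skin micro-detail, not a perceptual metric.

```python
def mean_abs_diff(a, b):
    """Average per-pixel absolute difference between two same-sized crops."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count


def detail_energy(img):
    """Average absolute difference between adjacent pixels.

    Higher values mean more local contrast (pores, creases, specular
    breakup); values near zero mean the crop is close to a flat wash.
    """
    height, width = len(img), len(img[0])
    total = 0.0
    count = 0
    for y in range(height):
        for x in range(width):
            if x + 1 < width:
                total += abs(img[y][x + 1] - img[y][x])
                count += 1
            if y + 1 < height:
                total += abs(img[y + 1][x] - img[y][x])
                count += 1
    return total / count if count else 0.0


# Toy data: a textured crop versus a perfectly smoothed one.
original = [[0, 10], [10, 0]]
smoothed = [[5, 5], [5, 5]]
difference = mean_abs_diff(original, smoothed)
detail_before = detail_energy(original)
detail_after = detail_energy(smoothed)
```

A large drop from `detail_before` to `detail_after` on a face crop doesn't prove the result looks worse, but it gives you something concrete to point at when a demo feels airbrushed.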

Graphics will keep changing, sometimes for the better and sometimes like a museum display of wax statues under floodlights. We can debate whether that tradeoff is worth lower hardware requirements and new performance tricks, but we should do it with clear comparisons and honest language.

Are you comfortable letting machine learning decide what a character’s face should feel like?