I watched the feed tilt from outrage to policy in a matter of minutes. A president’s all-caps demand landed like a gavel; within hours the Pentagon echoed a threat that could end an AI startup’s ties to the U.S. government. You can feel how quickly a business dispute became a national-security crossroads.

I’ll walk you through what happened, what each player is really saying, and why this matters to anyone who builds, buys, or relies on modern AI.

On Friday, a Truth Social post set off the alarm—then the administration doubled down

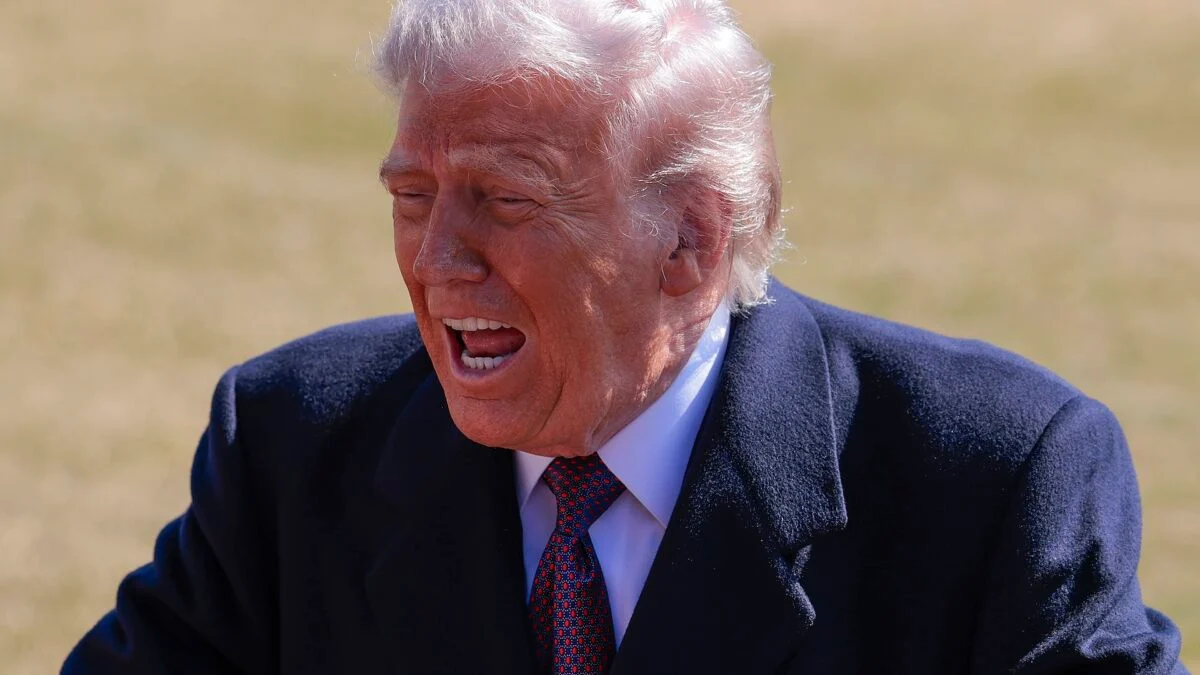

The president blasted Anthropic in all caps, calling its staff “leftwing nut jobs” and ordering every federal agency to stop using the company’s AI. I’ve seen public-tech fights before; this one is different because it ties product safeguards to national-defense access.

Anthropic’s model, Claude, includes explicit guardrails forbidding use for domestic surveillance and fully autonomous weapons. The White House and Defense Secretary Pete Hegseth demanded those protections be removed or Anthropic would be branded a “supply chain risk.” That label, once applied, would effectively ban Anthropic from government contracts and force contractors to sever ties.

Can the government force a private AI company to change its terms of service?

The short answer is: not easily. You can’t make a private firm rewrite its terms unless it’s breaking the law or under a legally authorized order. The Defense Production Act is on the table as a lever—Hegseth mentioned it—but invoking it to force changes in a company’s safety rules would be a novel and contested move. You should expect court fights and congressional questions if the administration attempts it.

On the ground, contractors and partners are suddenly in the crosshairs

Palantir and other vendors that help host or secure Claude for classified work are now collateral damage. I watched vendors scramble for contingency plans the way a stadium empties at the first hint of rain.

Labeling Anthropic a supply chain risk doesn’t only cut Anthropic out. It forces any government contractor that depends on Anthropic technology to choose: switch providers fast or lose government business. That ripple threatens relationships between the Pentagon and private-sector research, and it could deter new collaborations.

What does a “supply chain risk” designation mean for Anthropic?

A supply chain risk designation is a blunt instrument: it blocks federal contracts and pressures third-party vendors to drop a supplier. For Anthropic, it would mean losing access to a major customer and likely decoupling from infrastructure partners who cannot afford the compliance risk. That’s why contractors like Palantir are watching closely; their platforms are the plumbing that makes classified use possible.

On the record, rhetoric and realpolitik are colliding

Hegseth framed Anthropic’s refusal as a betrayal and accused the company of “virtue-signaling” that endangers troops. Senator Mark Warner pushed back, calling the public attacks inflammatory and warning that political theater could drive vendors away from intelligence work.

I’m not interested in slogans. I want to point out the concrete mechanics: a six-month phase-out was announced, yet Hegseth’s immediate labeling suggests the phase-out might be a countdown clock more than a grace period. The administration says it wants unfettered access; Anthropic says it will not remove safety limits. That standoff is now a policy decision disguised as an executive order.

On the arc of AI policy, trust is the fragile commodity

Startup trust and government requirements have always been a negotiation. Today that negotiation smells like coercion. When you force a supplier to choose between its stated values and a lucrative customer, you teach the market a lesson about who sets the rules.

This dispute is a test case for how the U.S. balances operational needs against corporate ethics and technical safeguards. If companies can be strong-armed, talent and investment will shift. If companies can hold their ground, the government will need to build alternatives or accept limits.

On the toolkit: legal levers and industry responses

The Pentagon can use procurement rules, security designations, and the Defense Production Act as pressure points. Anthropic can respond with legal challenges, public-relations campaigns, and by finding non-government customers. You should watch three things: litigation filings, contractor contingency plans, and whether Congress holds hearings that bring internal Pentagon memos into the open.

Here’s what the major names are saying and doing: the president posted on Truth Social and threatened criminal consequences; Hegseth tweeted about supply chain risk; Anthropic formally declined the Pentagon’s demand; Senator Mark Warner warned about politicizing national security. These are the actors you’ll see again if this becomes a legal saga.

The fight feels like a fuse burning toward a courtroom, and it also runs on a chess clock: each side has limited time and high stakes. If you follow AI procurement, this moment forces a choice about whether safety guardrails are negotiable or sacrosanct. How will the market and the state reconcile that choice?