I watched the App Store leaderboard refresh at 3 a.m., and Claude had leapfrogged ChatGPT into first place. You could feel the momentum: downloads delivering their own verdict while a policy fight went public. The catalyst was simple and sharp: Anthropic said no to a Pentagon request to remove safety guardrails.

I want to walk you through what happened, why downloads spiked, and what the OpenAI–Pentagon deal means for you and the wider AI ecosystem.

On Anthropic’s blog, leaders published a direct, public refusal — ‘We cannot in good conscience accede to their request’

Anthropic posted a short but consequential statement: in a narrow set of cases, AI can undermine democratic values. The company said defense officials wanted Claude stripped of safety guardrails so the model could be used for mass domestic surveillance and in fully autonomous weapons systems. Facing threats of being labeled a “supply‑chain risk” or pressured under the Defense Production Act, Anthropic held its line: it would not remove the protections.

The moment felt like a lighthouse in a storm — a single, visible refusal that redirected attention and downloads.

Anthropic’s public stance also triggered a political response. President Trump ordered federal agencies to “immediately cease” using Anthropic’s tools and called the company “radical left.” That escalation moved the fight from a corporate blog post to the center of national debate.

Why did Claude shoot to No. 1 on the App Store?

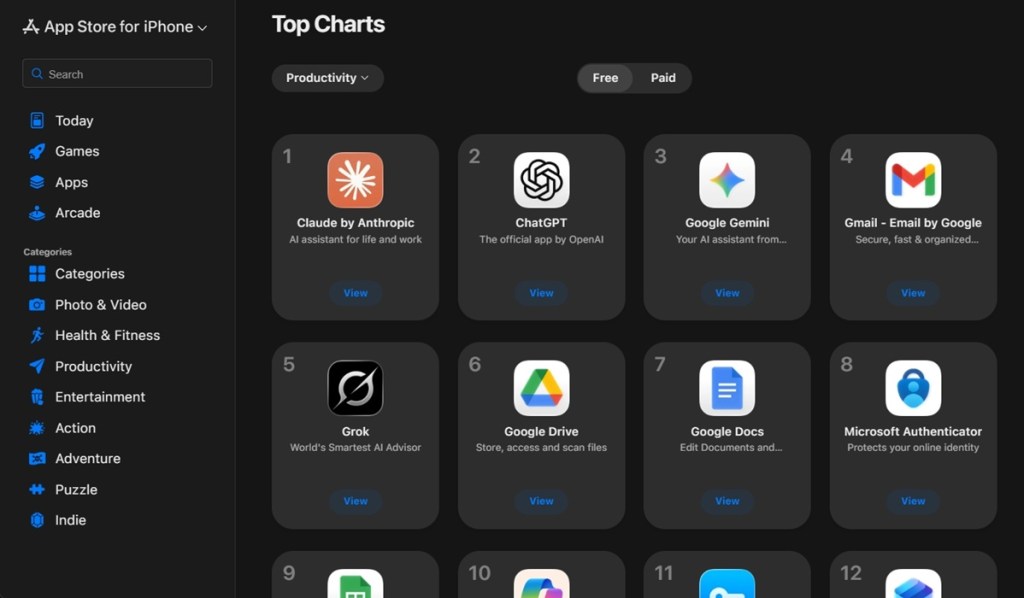

You’re asking the right question: downloads are votes, and Claude got a tidal wave. On February 28, Claude recorded an estimated 503,424 installs in a single day, its biggest day yet, pushing it to the top of the Free Productivity apps chart on Apple’s US App Store.

Several forces converged. Users angry with OpenAI’s Pentagon agreement searched for alternatives. Social trends like “Cancel ChatGPT” amplified the churn. And media coverage turned Anthropic’s refusal into a rallying point for people who want their AI with visible safety constraints.

At the Pentagon, officials quietly weighed operational needs against guardrails — OpenAI signed a deal to deploy models on classified networks

OpenAI announced on X that it reached an agreement to run its models on the Department of Defense’s classified systems. Sam Altman framed the deal as a way to meet national-security needs while prohibiting domestic mass surveillance and autonomous weapon systems. Critics, however, point to vague contract language — phrases like “unconstrained monitoring” and “for all lawful purposes” — as potential loopholes.

The switch looked like trading a knight for a rook in a chess match: tactical gains on one board, strategic risks on another.

OpenAI’s contract with the Pentagon has already generated blowback on social media. “Cancel ChatGPT” trended as users investigated alternatives, and the company now faces questions about where its loyalties lie: with users, with government customers, or with both.

Will users abandon ChatGPT over the Pentagon deal?

People are voting with downloads, but migration is messy. A trending hashtag or a surge in installs doesn’t guarantee long-term defections: product stickiness, integrations with tools like Gmail, Slack, or enterprise APIs, and platform trust all matter. Still, when a core audience sees a company sign a classified contract, churn is a real risk.

If you use ChatGPT for personal or business work, watch for changes to data handling, enterprise terms, and any shifts in feature availability tied to classified deployments. Alternatives on your radar should include Anthropic’s Claude and Google’s Gemini, plus emerging specialists that emphasize on-device processing or stricter guardrails.

I’ve followed AI policy fights where a single public refusal reorders markets, users, and regulators — and this feels like one of those inflection points. You’ll want to watch how Apple’s store rankings move, how OpenAI’s wording is interpreted by privacy advocates, and whether regulators press for clearer limits on government access to large models.

So which matters more to you: a model that accepts every government request, or one that says no and trusts users to judge?