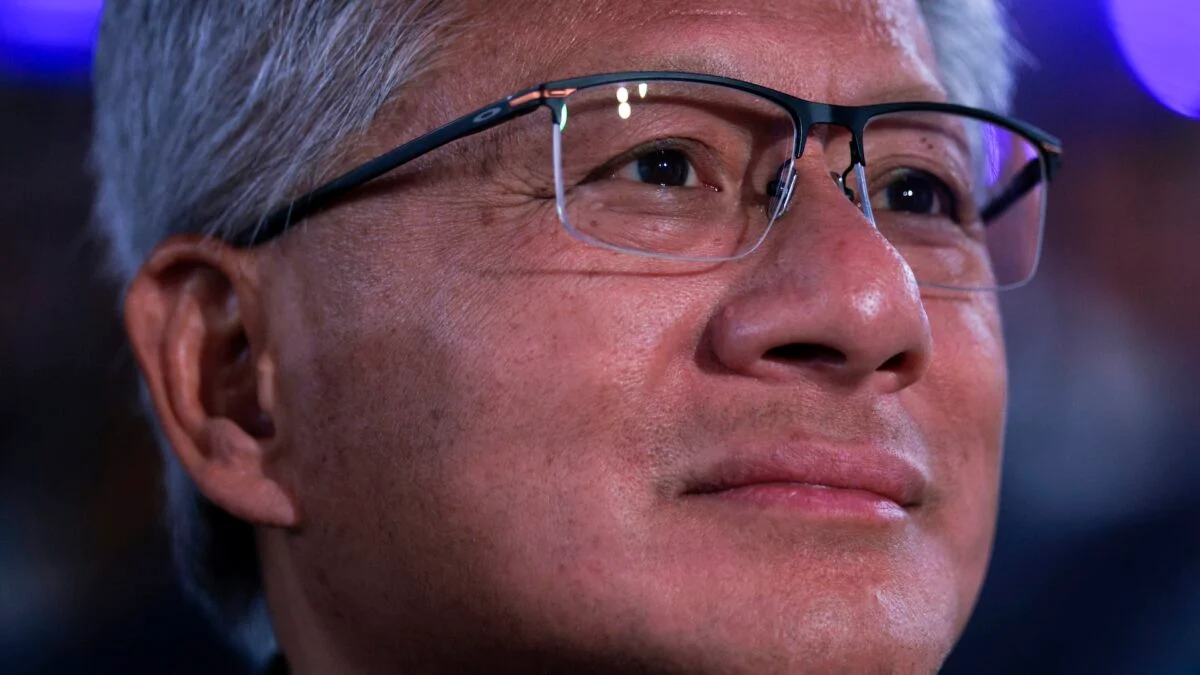

The lights went dim on what had been billed as a transformational partnership. Jensen Huang, speaking calmly onstage, left no room for doubt: the $100 billion plan is probably not happening. You can feel how quickly a headline turns into a strategic problem when money, chips and public markets collide.

I’ve followed deals like this for years, and you should too—because when giants change course, the ripples hit infrastructure, recruiting and the chips in every cloud rack. Here’s what I think happened, and what you should watch next.

On stage at the Morgan Stanley conference, Jensen Huang said the full $100 billion likely won’t happen.

Nvidia has already committed $30 billion (€28 billion) as part of a larger $110 billion (€101 billion) OpenAI funding round that included $50 billion (€46 billion) from Amazon and $30 billion (€28 billion) from SoftBank. Huang’s comment, made in public with investors listening, recast the $100 billion (€92 billion) figure announced in September as aspirational rather than guaranteed.

That matters because the original pledge was more than cash: it was a plan to build 10 gigawatts of AI data centers, funding each gigawatt as it came online and cycling dollars back into leases for Nvidia silicon. The first gigawatt was expected in the second half of 2026, and companies had begun sizing machines and negotiating capacity on that assumption.

Is the $100 billion Nvidia-OpenAI deal dead?

Short answer: probably. Huang’s public statement echoes Nvidia’s recent earnings language, which called the arrangement “a letter of intent with an opportunity to invest.” Reports from Reuters, the Wall Street Journal and the Financial Times suggested talks stalled and that both sides were publicly downplaying tension while privately reconsidering structure and timing.

In conference rooms and earnings calls, the wording shifted from “deal” to “opportunity.”

When I read the WSJ and Reuters pieces, I saw the same pattern: early-stage megadeals attract optimistic headlines, then scrutiny peels back assumptions. The WSJ reported that Huang privately questioned OpenAI’s business discipline and warned that weaker execution could hurt Nvidia’s sales; Reuters later said OpenAI had concerns about inference performance on some Nvidia chips and blamed hardware for issues in its Codex assistant.

Those reports forced both companies into defensive choreography: denials, conciliatory quotes, and then more guarded financial statements. Nvidia’s November filing called the arrangement a letter of intent; by the most recent earnings report, the company was declining to promise a completed transaction.

Why did Nvidia pause its $100 billion pledge?

Several forces converged: technical fit, commercial discipline, and timing. OpenAI’s pursuit of an IPO this year changes incentives, because public markets and lockup rules make multiyear, multibillion-dollar, circular financing trickier. Nvidia needs confidence that its chips will be used in ways that grow revenue, not merely shuffle financing between the same players. And published reports pointed to disagreements over inference performance on Nvidia hardware.

On conference calls and in analyst notes, the potential collapse felt sudden but predictable.

Here’s how I read it: the initial announcement created a feedback loop that made observers nervous about circular dealmaking—companies signing multibillion-dollar deals with one another repeatedly, buying and leasing capacity in a closed loop. Critics warned it could behave like a house of cards if demand fell short. Now, with OpenAI inching toward an IPO and Nvidia unwilling to commit more capital publicly, the loop looks less stable.

Reports in November and January suggested talks hadn’t progressed beyond preliminary stages. The Financial Times later said the two firms would abandon the deal. The combined signal—public pause, mixed reporting, guarded filings—left the market with a clear message: don’t count on the remaining $70 billion.

Will OpenAI still use Nvidia chips?

Short-term: almost certainly yes. Nvidia dominates GPU performance for training and for many inference workloads. But the relationship will be transactional, not monopolistic: OpenAI is scouting alternatives, Google and Anthropic are competitors, and Amazon’s role in the funding round (and as a cloud provider) complicates incentives. Expect OpenAI to hedge across vendors and cloud providers rather than bind itself to a single hardware supplier.

I’ll give you the practical signals to watch: official language around the IPO timetable; capital commitments written into SEC filings; partnerships announced with cloud providers like AWS; and technical tests on inference latency and efficiency for chips such as Nvidia’s latest accelerators. If you’re tracking vendor risk, pay attention to procurement cycles at major cloud providers and who wins capacity reservations.

One final image: a megadeal collapsing feels as if someone had turned out the lights in a data center, leaving silence, inventory counts and emergency plans. You and I both know the industry will adapt; the question is which firms will reshape the market from the wreckage.