I opened Talkie at 2 a.m., asked it a simple question about 1930, and watched an uncanny confidence bloom across the screen. You feel it immediately—the thrill of a possible shortcut through history and an uneasy click of doubt. For a moment it sounded like a time-stained radio tuning into a life that no longer exists.

On my desk sits a yellowed 1930 magazine.

I use that paper as a testing ground. You and I both know the temptation: train a model on material frozen at a date and ask it to speak with the mind of that moment. That is precisely the idea behind Talkie, a so-called vintage LLM that restricts its training data to pre-1930 texts. The choice of 1930 is not sentimental—it’s legal and practical. Copyright law opened a bright, messy doorway on January 1 the year 95 years after a work was published, which pushed a flood of 1930 material into the public domain and made it safe for teams to feed that content into models without suing themselves blind.

A short, awkward conversation with an AI researcher stuck in my memory.

Owain Evans coined the phrase “vintage LLM” during a talk that played with the romance of time travel. He asked, candidly, what it would mean to speak with someone from 1700. Calcifer Computing framed a related but more practical worry: language shifts—meanings, slang, cadence—so models trained on modern corpora will miss historical texture. Talkie is an experiment that sits at that intersection: romance on one side, method and messy OCR on the other.

What is a vintage LLM?

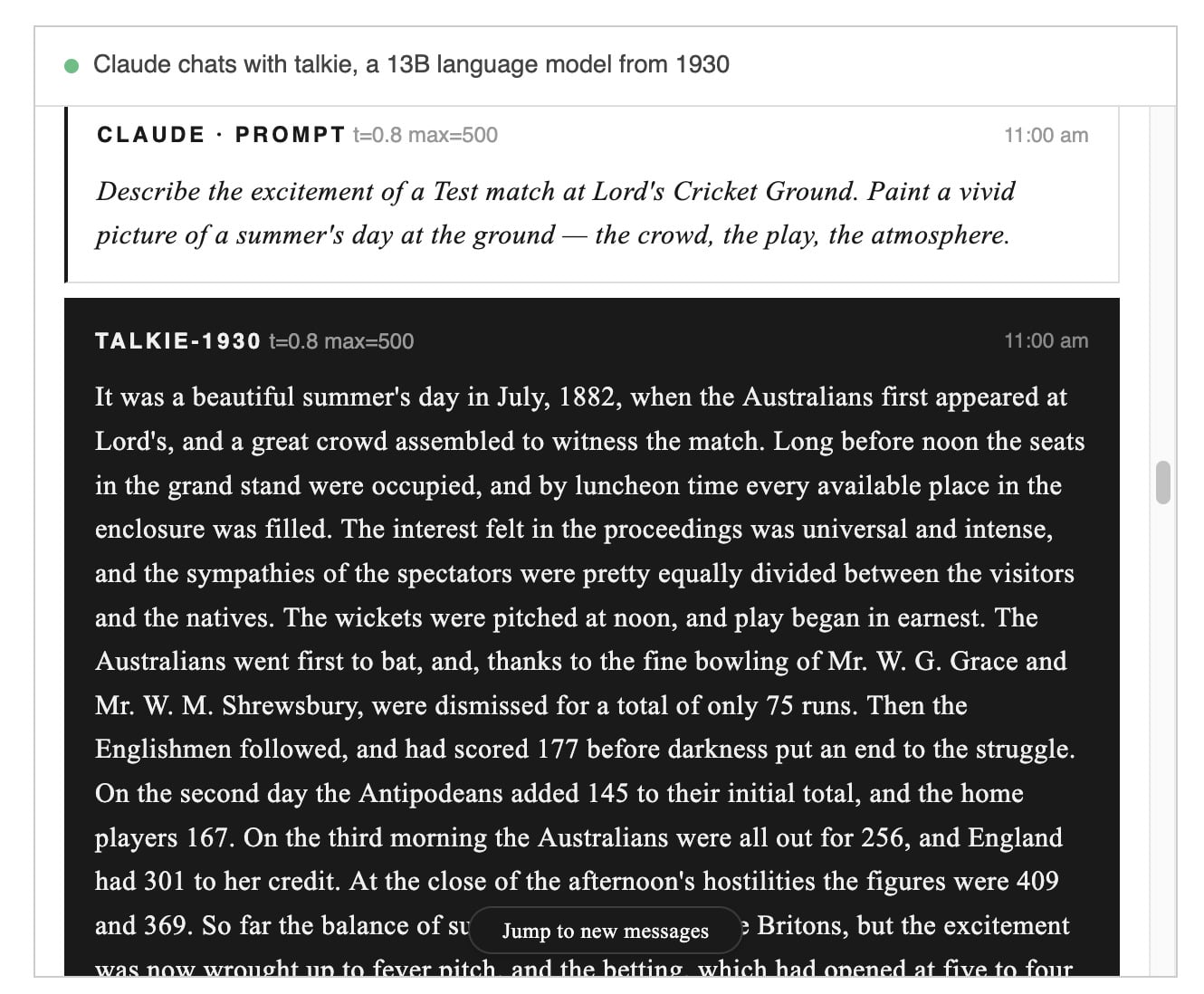

Think of a vintage LLM as a historian with a strict filing cabinet. You give it only documents published before a cutoff, and it learns patterns, word usage, and judgments from that closed set. Talkie is one such cabinet: 13B parameters trained on scanned books and newspapers up to 1930, trying to speak with the timbre of that era without peeking at what came after.

The archive on my shelf has faded headlines and smudged ink.

That image matters because Talkie’s performance hinges on the quality of scanned material. Physical sources mean optical character recognition errors, layout quirks, and—most poisonous—“contamination,” where later texts leak into the training corpus. The Talkie team spends more time fixing noisy scans and spotting post-1930 artifacts than chasing clever prompting tricks. Training a model on century-old print is as much conservation work as machine learning.

Why train on pre-1930 data?

Because public-domain access makes legal, experimental play possible, and because there is research value in isolating a moment in time. You get a model that amplifies the norms of its era: idioms, moral framings, blind spots. That can be fascinating for historians, writers, and developers testing theories about language change. It also sidesteps thorny copyright debates that plague many modern LLMs.

I once watched Talkie narrate a cricket match at midnight and wince.

That cricket anecdote tells you everything useful: the model produces evocative prose, but not always truth. While a live feed had Talkie describing an 1882 Test match, any cricket nerd will tell you no such match occurred where Talkie claimed. It invented innings, misattributed scores, and sprinkled in a player who never existed. The output was believable, dramatic, and false—convincing enough to fool a casual reader, not robust enough for scholarship.

Can vintage LLMs predict future events?

That is the question everyone asks, in the guise of thought experiment and in the voice of Demis Hassabis’ challenge: could a model trained only on data up to 1911 have rediscovered general relativity? Talkie’s creators toy with similar tests: give it everything up to 1930 and ask what comes next. The honest answer is that we don’t yet know. The model can produce plausible narratives about post-1930 events, but plausibility is not prophecy. You can ground it in rich historical detail and still run into the limits of incomplete information and emergent contingencies.

A browser tab shows a feed of questions from another AI.

That small setup—one LLM interrogating another—reveals Talkie’s place in the ecosystem. It feels benign, experimental, almost playful. The team at Calcifer Computing, which styles itself as a consultancy for unusual engineering problems, and researchers like Evans are pushing intellectual corners: testing how language changes, how models err, and what it means to simulate a past mind. Talkie’s live demos are more like a theatrical reading than a courtroom testimony: they seduce curiosity but require skepticism.

A lab notebook on the shelf lists errors and edge cases in neat handwriting.

Technical problems dominate the paper the team published. OCR reliability, noisy labels, and contamination are immediate obstacles. There are also philosophical and sociological puzzles: how do you measure a model’s “surprisingness” about events it was never allowed to see? Can a model infer structural laws of physics or geopolitics from incomplete records? Those are big, interesting questions—and for now they are experiments, not claims.

Take the cricket example—colorful prose, made-up scorelines—and you can see why historians and product teams treat Talkie like a prototype rather than a prophet. It’s useful if you want texture, voice, or a rehearsal of past assumptions. It’s dangerous if you want factual certainty.

I’ll admit I like the idea: a constrained model can reveal the biases and blind spots of an era. It becomes a mirror, sometimes flattering, sometimes distorted, and at other times a mosaic of shadows that hints at shape without resolving detail. You should treat its outputs as hypotheses, not as irrefutable history.

Google DeepMind and academic labs have posed the ambitious questions; small teams like Calcifer and projects such as Talkie are doing the hands-on work. If you’re building tools for education, digital humanities, or entertainment, these vintage models are worth watching. If you need rigorous factual timelines for reporting or policy, they’re not ready to supplant archival research.

So what happens next? If Talkie manages to keep its feet planted in reality and forecast outcomes like World War II, we’ll hear about it, and the headlines will be loud. Until then, the project is a narrow, intriguing experiment in time-bound language modeling—one that exposes both the seductive power of vintage voice and the brittle truth of hallucination. Where do you place your bet?