I remember the moment I first read Anthropic’s warning: a model too sharp to be left loose. You felt that shift, too—an industry skittish and suddenly focused. Now OpenAI has stepped forward with Daybreak, and you should be paying attention.

OpenAI is launching Daybreak, our effort to accelerate cyber defense and continuously secure software.

AI is already good and about to get super good at cybersecurity; we’d like to start working with as many companies as possible now to help them continuously secure themselves.

— Sam Altman (@sama) May 11, 2026

I’m writing as someone who follows these product-theater moves closely, and I’ll tell you what I see: Daybreak is OpenAI’s answer to Anthropic’s Project Glasswing, but dressed for daylight. You can feel the psychological pivot: less secrecy, more sign-ups. That matters.

Governments scrambled after Anthropic’s notice — how Daybreak surfaces from that chaos

When Anthropic pulled Claude Mythos Preview from general release, it forced a conversation about capability and control. I watched the headlines—developers like Daniel Stenberg called it a marketing coup; policymakers took notes; private firms whispered about partnerships.

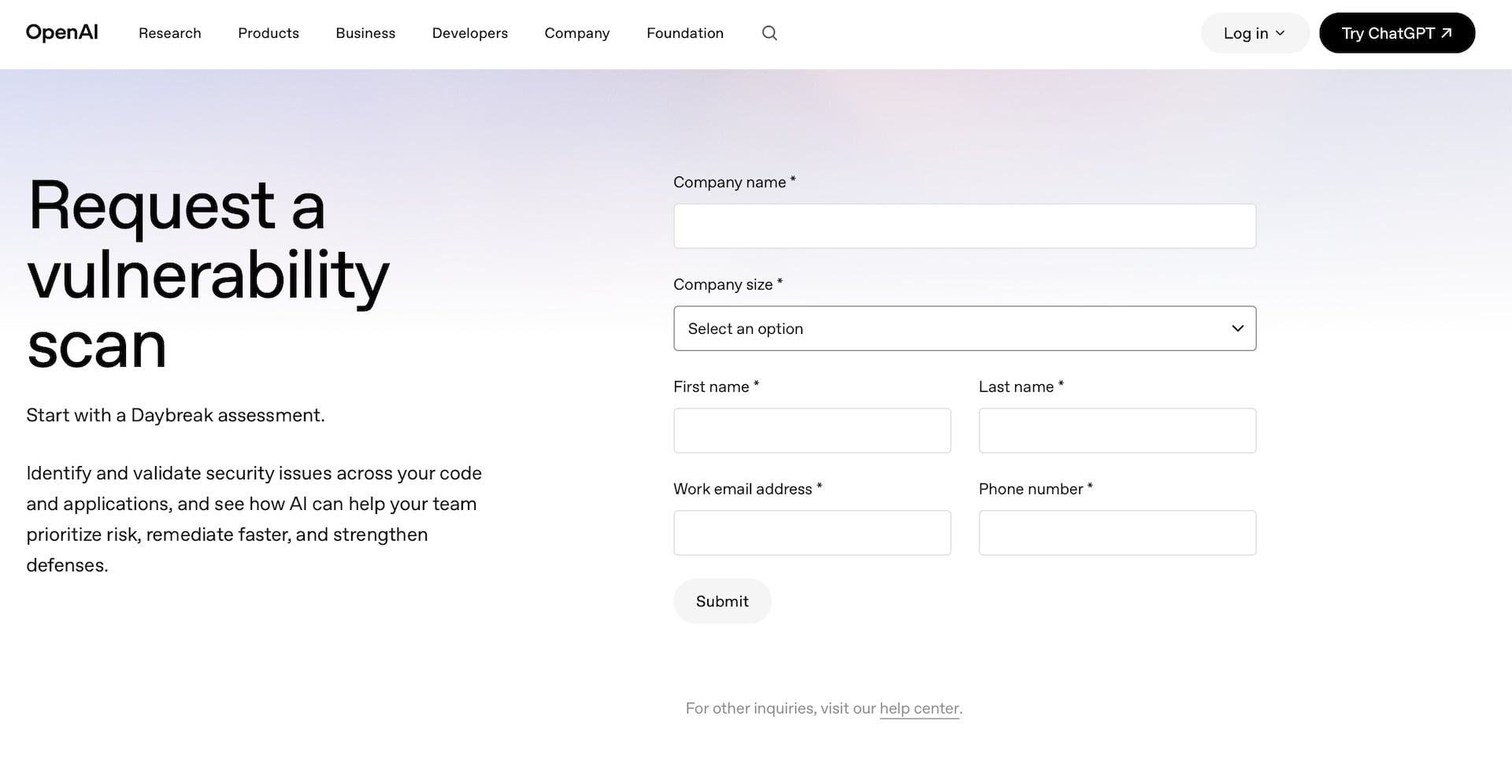

Anthropic framed Mythos as too cyber-capable for the public. Project Glasswing followed: a defensive program, limited partners, and the sense that powerful models needed to be fenced off. Daybreak arrives in that context, but with a different tone. OpenAI’s page invites you to request a vulnerability scan or contact sales, which signals a push toward openness rather than secrecy.

What is OpenAI Daybreak?

Daybreak is OpenAI’s program to pair its advanced models with corporate and government codebases to find vulnerabilities earlier and fix them faster. It builds on Codex Security—announced as a research preview in March—and layers threat modeling on top of code analysis.

A customer clicked “Request a vulnerability scan” — and this is what happened next

I filled out the brief form OpenAI provides; you probably noticed how short it is. That’s intentional: Daybreak’s public face wants broad participation, not elite secrecy.

Contrast that with reports from Axios and the hushed tone around Glasswing. OpenAI’s messaging comes off as a service: scans, threat models, and then remediation. In practice, Daybreak maps functions, trusted actors, and likely failure modes before poking at the code.
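To make that mapping step concrete, here is how I picture a threat model as data. This is a minimal sketch of my own, not Daybreak’s format or any OpenAI API: the `Actor` and `Component` classes, the `untrusted_entry_points` helper, and the sample entries are all hypothetical names I made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Actor:
    """Someone or something that can call into the system (hypothetical)."""
    name: str
    trusted: bool


@dataclass
class Component:
    """A function or service, who can reach it, and how it might fail."""
    name: str
    callers: list[Actor] = field(default_factory=list)
    failure_modes: list[str] = field(default_factory=list)


def untrusted_entry_points(components: list[Component]) -> list[str]:
    """Return names of components reachable by at least one untrusted actor."""
    return [c.name for c in components if any(not a.trusted for a in c.callers)]


# Illustrative data only; a real model would be derived from the codebase.
admin = Actor("admin", trusted=True)
anonymous = Actor("anonymous_user", trusted=False)

model = [
    Component("login_handler", [anonymous, admin], ["credential stuffing"]),
    Component("config_writer", [admin], ["privilege misuse"]),
]

print(untrusted_entry_points(model))  # prints ['login_handler']
```

The point of writing the map down first is prioritization: the components an untrusted actor can reach are the ones you would want scanned before anything else.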

How does Daybreak compare to Anthropic’s Project Glasswing?

Glasswing felt locked-down and selective because Anthropic treated Mythos as both a risk and an asset. OpenAI is signaling scale. You still get a limited partnership vibe, but the front door is open: buttons, forms, and sales contacts. That changes who gets access and how transparent the process appears.

Two metaphors help. Daybreak tries to act like a lighthouse in fog, marking hazards instead of leaving them in shadow. It also behaves like a locksmith working a bent key, trying to open what’s broken without shattering the lock.

Developers found a curl vulnerability — and that mattered for everyone

When Daniel Stenberg and others showed Mythos could find an exploitable curl issue, the industry paid attention. You should, too.

That signal did two things: it proved these models can surface real, non-trivial vulnerabilities, and it forced companies to ask whether they wanted that power in-house, in a vendor’s hands, or behind coordinated disclosure programs. Daybreak pitches itself as a partner for that work.

Can AI safely perform vulnerability scans?

Yes, with caveats. AI can automate threat modeling, enumerate trust boundaries, and flag patterns humans miss. It can also produce false positives, hallucinate exploits, and expose sensitive information. OpenAI’s play is to fold human review and partner agreements into the loop. You’ll still want legal and security teams at the table.
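If you want a feel for what “human review in the loop” could mean in practice, here is a small sketch under my own assumptions. Nothing here comes from OpenAI: the `Finding` fields and the `ready_for_remediation` gate are hypothetical, and the rule (a finding must be reproduced and signed off by a named reviewer) is just one plausible policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    """One model-reported issue (hypothetical structure)."""
    title: str
    reproduced: bool                 # did a proof of concept actually trigger it?
    reviewer: Optional[str] = None   # human who signed off, if anyone has


def ready_for_remediation(findings: list[Finding]) -> list[Finding]:
    """Keep only findings that were reproduced AND approved by a human."""
    return [f for f in findings if f.reproduced and f.reviewer is not None]


# Illustrative findings only.
findings = [
    Finding("buffer overflow in parser", reproduced=True, reviewer="alice"),
    Finding("possible SQL injection", reproduced=False, reviewer="bob"),
    Finding("race condition in cache", reproduced=True),
]

for f in ready_for_remediation(findings):
    print(f.title)  # prints: buffer overflow in parser
```

Requiring both conditions is deliberate: reproduction filters out hallucinated exploits, and the named reviewer gives your legal and security teams someone accountable for each fix.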

I’ve seen vendors promise protective programs before — here’s what’s different this time

Vendors have offered managed security and red teaming for years; the new variable is model capability. When a frontier model can synthesize attack chains, you gain scale but also face new questions: who controls access, where model outputs end up in sensitive contexts, and how fixes are applied.

OpenAI says Daybreak uses Codex Security to build the threat model, inspects real code for exploitable vulnerabilities, and then patches them. That patching promise is the pivot: it moves the relationship from auditor to remediator, and that introduces product and liability considerations.

So where does this leave you? If you run software, Daybreak is now a visible option to invite automated, model-driven scrutiny. If you advise C-suites, this is a service worth parsing, because access and transparency differ sharply from Anthropic’s limited program.

There will be debates about power, privilege, and control: who sees the vulnerabilities, who fixes them, and who decides when a capability is too risky for public release. You’ll want to ask about data handling, partner lists, and disclosure timelines—because those answers reveal whether Daybreak is a tool or a gatekeeper.

Watch the partner list and the partner agreements. If OpenAI wants scale, companies will want controls; governments will want influence. You and I both know those negotiations will shape access and safety more than any blog post.

Are we about to shift from guarded prototypes to widely used cyber-capable models—and if that happens, who holds the map?